On April 18, the Kinding Law Firm Overseas Expansion Team hosted a live stream sharing practical insights on expanding games into the U.S. market. The session focused on compliance practices for protecting minors in the U.S., covering the relevant regulations, COPPA enforcement cases involving games, and hands-on experience meeting COPPA compliance requirements. Two attorneys held a dialogue-style discussion, tailored their content to specific types of businesses, and answered audience questions about U.S. compliance. Below is a recap of the key points from the session.

PART 1

Key Provisions of COPPA

COPPA (the Children's Online Privacy Protection Act) is a U.S. federal law specifically designed to protect the privacy of children under 13. It requires operators to obtain verifiable parental consent before collecting children's personal information and imposes strict restrictions on how that data is processed. Key provisions related to the protection of minors include:

1. Requiring verifiable parental consent before collecting, using, or disclosing personal information from children under 13;

2. Prohibition on storing or transferring children's information outside the U.S. without notification;

3. Granting parents the right to delete or correct children's information;

4. Prohibition on targeted advertising to children and minors.

Key Consideration: COPPA applicability. How do you determine whether a product or service targets children as part of its user base?

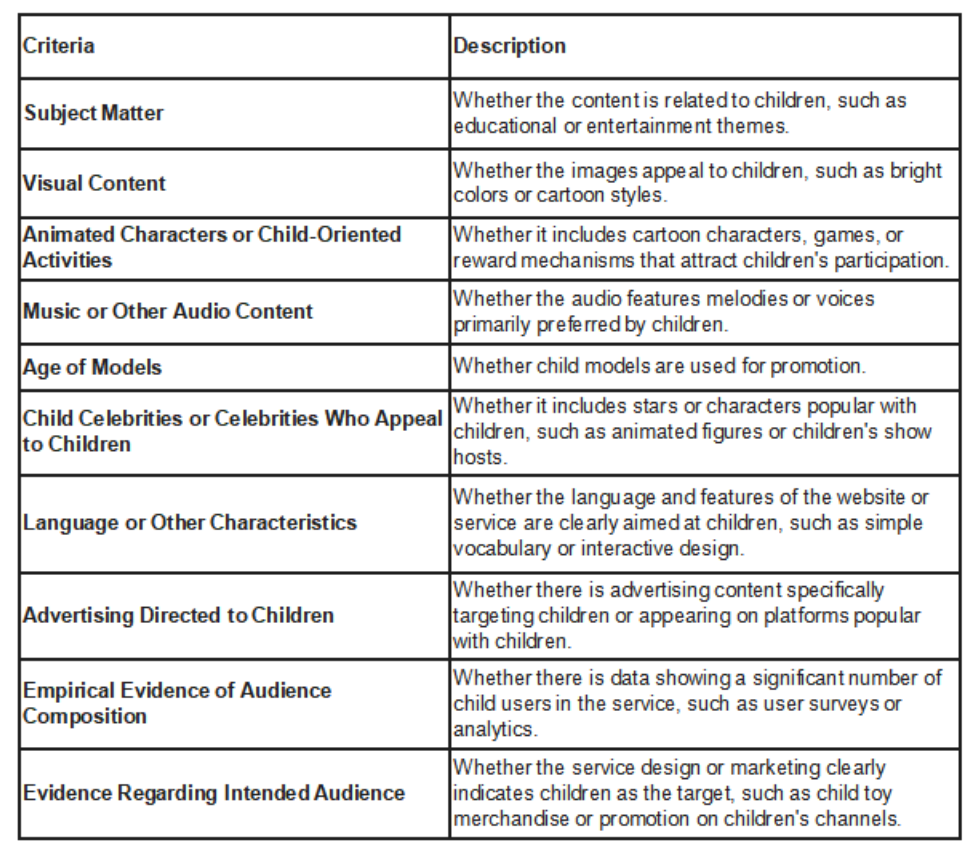

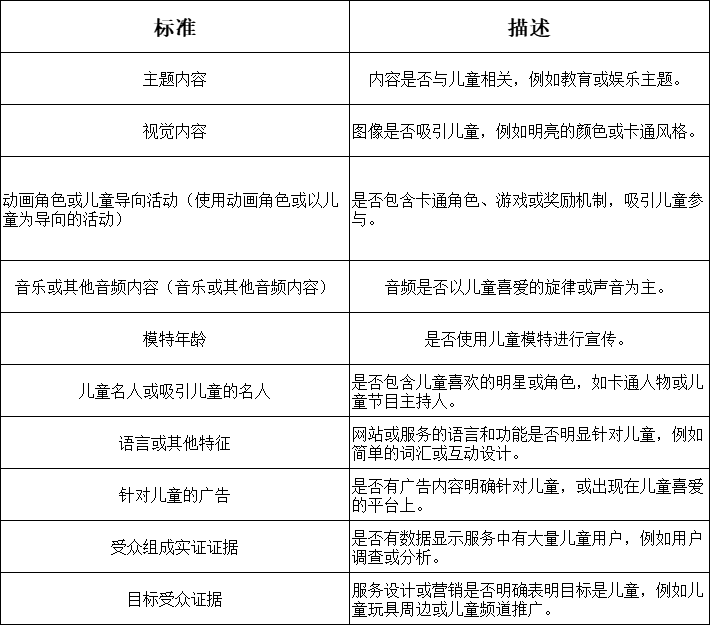

Criteria for determining whether internet products/services target minors include:

- Age of promotional models, celebrities, or KOLs featured

- Marketing materials associated with the product/service

Additionally, third-party evidence, such as audience composition data and evidence of the intended audience, is used to assess whether children are among the users. Detailed evaluation criteria and descriptions are outlined in the table below:

PART 2

Analysis of Typical COPPA Cases

This section focuses on two landmark FTC enforcement cases in the gaming industry, involving Fortnite and Genshin Impact. Key takeaways from both cases are summarized below:

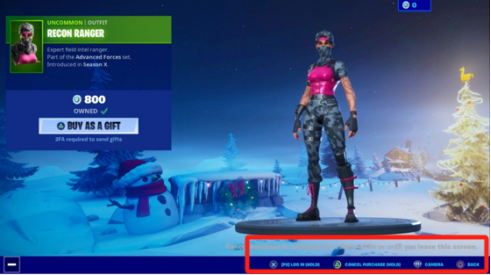

Age Verification Mechanism Deficiencies:

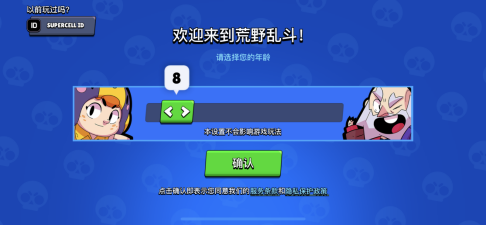

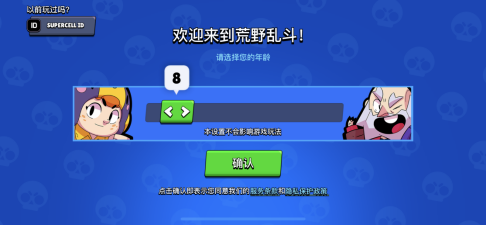

Relying solely on user-reported age (e.g., Genshin Impact's “12+ declaration”) does not exempt an operator from liability. Age screening must be conducted in a neutral manner, without default age settings or incentives for visitors to falsify their age. FTC guidelines further require companies to proactively identify children through technical means (e.g., third-party verification tools) or behavioral analysis (e.g., playtime duration, spending patterns).
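
A neutral age screen of the kind described above can be sketched as follows. This is a minimal Python illustration under stated assumptions: the function names and routing labels are hypothetical, the form is assumed to start with empty date fields rather than a pre-selected birthday, and the prompt is assumed never to reveal the under-13 cutoff. It covers only the self-reported screen, not the proactive technical or behavioral identification the text says FTC guidance also calls for.

```python
from datetime import date
from typing import Optional

# Children under 13 are covered by COPPA.
COPPA_AGE_THRESHOLD = 13


def age_on(birth_date: date, today: date) -> int:
    """Return the number of whole years between birth_date and today."""
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    return today.year - birth_date.year - (0 if had_birthday else 1)


def neutral_age_screen(
    year: Optional[int], month: Optional[int], day: Optional[int], today: date
) -> str:
    """Route a visitor using a date of birth they entered themselves.

    Hypothetical routing labels. The fields start as None (no default
    birthday), and nothing in the UI tells the visitor which answer
    unlocks the game.
    """
    if year is None or month is None or day is None:
        return "incomplete"  # never substitute a default date
    try:
        dob = date(year, month, day)
    except ValueError:
        return "invalid"  # e.g. February 30
    if dob > today:
        return "invalid"
    if age_on(dob, today) < COPPA_AGE_THRESHOLD:
        return "parental_consent_required"
    return "standard_flow"
```

For example, a visitor who enters 2012-04-19 on 2025-04-18 is 12 and is routed to the parental consent flow, while a visitor who leaves any field empty is simply asked again rather than assigned a default age.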

Obligations for Mixed-Audience Games:

If game design (e.g., a cartoon art style) or marketing activities (e.g., collaborations with child KOLs) are likely to attract children, COPPA's strictest standards apply even if the intended audience is teenagers. The amended COPPA Rule also refines the definition of a “mixed audience” service and adds new factors for assessing such products, including marketing or promotional materials and plans, representations made to consumers or third parties, user or third-party reviews, and the age of users on similar websites or services.

Transparent Probability and Pricing:

Dynamically display cumulative spending amounts on gacha interfaces (e.g., “Spent: $50”) and provide a “probability calculator” tool to help players estimate costs.
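
A probability calculator like this can rest on one formula: if each pull independently yields the target item with probability p, the chance of getting it at least once in n pulls is 1 - (1 - p)^n. The sketch below assumes a fixed rate with no pity or guarantee mechanics (many real gacha systems have them, and a real calculator would need to model them), and it keeps spending in integer cents so the “Spent” banner never shows rounding drift. All names are illustrative.

```python
from typing import List


def prob_at_least_one(p: float, pulls: int) -> float:
    """Chance of at least one success in `pulls` independent draws at rate p."""
    return 1.0 - (1.0 - p) ** pulls


def pulls_for_confidence(p: float, confidence: float) -> int:
    """Smallest number of pulls whose success chance reaches `confidence`."""
    if not (0.0 < p < 1.0 and 0.0 < confidence < 1.0):
        raise ValueError("p and confidence must be strictly between 0 and 1")
    n = 1
    while prob_at_least_one(p, n) < confidence:
        n += 1
    return n


def estimated_cost_cents(p: float, confidence: float, price_per_pull_cents: int) -> int:
    """Cost of the pulls needed to reach `confidence`, in cents."""
    return pulls_for_confidence(p, confidence) * price_per_pull_cents


def spending_banner(purchases_cents: List[int]) -> str:
    """Cumulative spend shown on the gacha screen, e.g. 'Spent: $50.00'."""
    total = sum(purchases_cents)
    return f"Spent: ${total // 100}.{total % 100:02d}"
```

Feeding the published drop rate and pull price into `estimated_cost_cents` lets a player see the likely outlay before committing, which is the point of the transparency measure.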

Anti-Predatory Design:

Implement a cooling-off period mechanism: Require secondary confirmation for single transactions exceeding a specific threshold (e.g., $100), or grant guardians the ability to set weekly spending limits for minors within parental control features.
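
The two safeguards above can be combined in a single purchase check, sketched here in Python. Assumptions, all hypothetical: amounts are in cents, the $100 example is used as the confirmation threshold, the guardian's weekly limit is applied over a rolling seven-day window (a calendar week would work equally well), and the status strings are illustrative.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List, Optional, Tuple

# The $100 example threshold from the text, in cents.
CONFIRMATION_THRESHOLD_CENTS = 10_000


@dataclass
class MinorWallet:
    # Weekly cap chosen by the guardian; None means no cap has been set.
    weekly_limit_cents: Optional[int] = None
    purchases: List[Tuple[datetime, int]] = field(default_factory=list)

    def spent_in_last_week(self, now: datetime) -> int:
        """Sum of purchases in the rolling 7 days ending at `now`."""
        start = now - timedelta(days=7)
        return sum(amount for ts, amount in self.purchases if start < ts <= now)


def check_purchase(wallet: MinorWallet, amount_cents: int, now: datetime,
                   confirmed: bool = False) -> str:
    """Apply the guardian cap first, then the second-confirmation rule."""
    cap = wallet.weekly_limit_cents
    if cap is not None and wallet.spent_in_last_week(now) + amount_cents > cap:
        return "blocked_weekly_limit"
    if amount_cents > CONFIRMATION_THRESHOLD_CENTS and not confirmed:
        return "needs_confirmation"
    wallet.purchases.append((now, amount_cents))
    return "approved"
```

Checking the guardian cap before the confirmation prompt means a minor is never asked to confirm a purchase that would be refused anyway.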

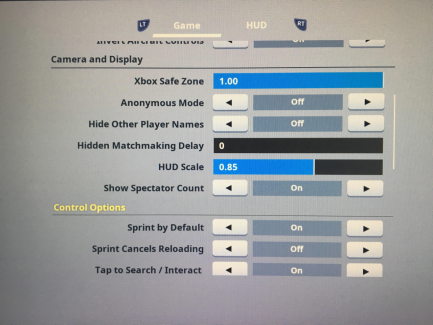

Age Verification and Parental Consent:

Implement tiered verification: Require self-reported age upon initial login. Trigger parental consent workflows (e.g., credit card verification, video authentication) for suspected child users (e.g., frequent account switching, small-value purchases).
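
The tiering logic can be sketched as a small decision function. This is a hypothetical illustration: the text names the signals (self-reported age, frequent account switching, small-value purchases) but not any numbers, so every threshold below is an assumption, and the consent workflows themselves (credit card checks, video authentication) are out of scope.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical thresholds: the text names the signals but not the numbers.
MAX_ACCOUNT_SWITCHES_PER_DAY = 3
SMALL_PURCHASE_MAX_CENTS = 500
MAX_SMALL_PURCHASES_PER_DAY = 5


@dataclass
class DailySignals:
    self_reported_age: int
    account_switches: int = 0
    purchase_amounts_cents: List[int] = field(default_factory=list)


def verification_tier(s: DailySignals) -> str:
    """Tier 1 trusts the self-reported age; tier 2 triggers parental consent."""
    if s.self_reported_age < 13:
        return "parental_consent"
    small = sum(1 for a in s.purchase_amounts_cents if a <= SMALL_PURCHASE_MAX_CENTS)
    looks_like_child = (
        s.account_switches > MAX_ACCOUNT_SWITCHES_PER_DAY
        or small > MAX_SMALL_PURCHASES_PER_DAY
    )
    return "parental_consent" if looks_like_child else "self_declared"
```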

Establish “Child Safety Mode”: Social features and in-app purchase permissions are disabled by default, accessible only after parental unlocking or verification.
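
“Off by default” can be made structural by giving every gated feature a `False` default and allowing only a verified parent to flip it. A minimal Python sketch, with hypothetical feature names:

```python
from dataclasses import dataclass, fields


@dataclass
class ChildSafetyMode:
    # Every gated feature starts disabled; only a verified parent can enable it.
    chat: bool = False
    friend_requests: bool = False
    in_app_purchases: bool = False

    def unlock(self, feature: str, parent_verified: bool) -> bool:
        """Enable one feature if, and only if, a verified parent approves."""
        names = {f.name for f in fields(self)}
        if feature not in names:
            raise ValueError(f"unknown feature: {feature}")
        if not parent_verified:
            return False
        setattr(self, feature, True)
        return True
```

Unlocking is per feature, so a parent can allow chat without also enabling purchases.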

Data Minimization and Retention Limits:

Collect only essential information (e.g., not mandating children's birthdays or geolocation) and automatically delete data upon parental request or account closure.
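
Data minimization is easiest to enforce with an allowlist applied before anything is stored, plus a deletion path tied to the two triggers named above. The sketch below is hypothetical throughout: the allowlisted fields (including an `age_band` stored instead of a birthday) are assumptions, and an in-memory dict stands in for real storage, so backups and logs are not covered.

```python
from typing import Dict, Optional

# Hypothetical allowlist of fields the game actually needs for a child account.
ESSENTIAL_FIELDS = {"account_id", "display_name", "age_band"}
DELETION_REASONS = {"parental_request", "account_closure"}


def minimize(profile: Dict[str, object]) -> Dict[str, object]:
    """Keep only allowlisted fields; e.g. birthday and geolocation are dropped."""
    return {k: v for k, v in profile.items() if k in ESSENTIAL_FIELDS}


class ChildDataStore:
    def __init__(self) -> None:
        self._records: Dict[str, Dict[str, object]] = {}

    def save(self, profile: Dict[str, object]) -> None:
        record = minimize(profile)
        self._records[str(record["account_id"])] = record

    def get(self, account_id: str) -> Optional[Dict[str, object]]:
        return self._records.get(account_id)

    def delete(self, account_id: str, reason: str) -> bool:
        """Erase a record on parental request or account closure."""
        if reason not in DELETION_REASONS:
            raise ValueError(f"unsupported deletion reason: {reason}")
        return self._records.pop(account_id, None) is not None
```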

PART 3

COPPA Compliance Practical Dialogue

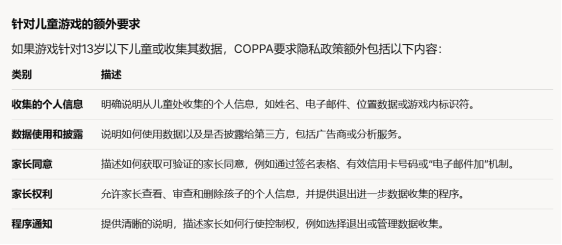

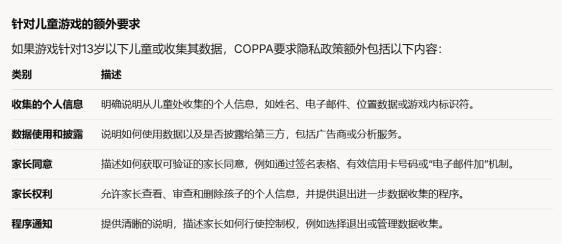

Children's Data Protection and Privacy Policy Development

The discussion focused on data processing compliance, including responding to rights requests, user agreements, and privacy policies. Special attention was given to children under 13, specifically the data collection practices that apply to them and the disclosures required in privacy policies. The parental consent process was also addressed, including how to collect parents' personal information and associated data and how to process and anonymize that data to safeguard user rights. Finally, the session explored drafting user agreements and privacy policies, along with page and interaction design considerations for products and websites.

User Privacy Protection and Compliance Requirements

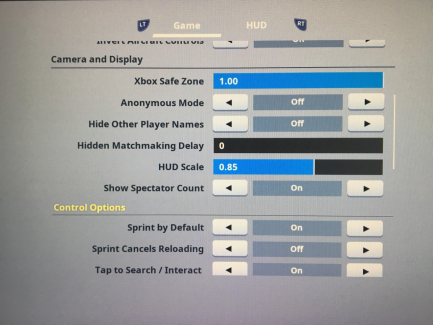

For the user consent mechanism, the speakers recommended a simple checkbox that keeps the user experience seamless. On the backend, the user's consent time, user ID, IP address, and other relevant information must be logged.

During product use, multiple contact channels must be available, such as email and online forms. On receiving a user request, the operator must verify the requester's identity, for example through a bound email address, a verification code, or the player's game ID. For minor users, the guardian's identity must be confirmed with a government-issued ID, driver's license, or similar document. Requests should be resolved within 45 days of receiving complete request materials.

Regarding data deletion, certain data must still be retained for internal analysis and evidence preservation, including registration information, email addresses, phone numbers, and device identifiers.
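
The consent log and the 45-day response window can be sketched as below. Assumptions: timestamps are recorded in UTC, the `policy_version` field (which text the user agreed to) is a hypothetical addition beyond the time, ID, and IP mentioned in the session, and the window is counted in calendar days from the moment the request materials are complete.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Window cited in the session, counted from receipt of complete materials.
RESPONSE_WINDOW = timedelta(days=45)


@dataclass(frozen=True)
class ConsentRecord:
    user_id: str
    ip_address: str
    consented_at: datetime
    policy_version: str  # hypothetical extra field: which text the user agreed to


def record_consent(user_id: str, ip_address: str, policy_version: str,
                   now: Optional[datetime] = None) -> ConsentRecord:
    """Build an immutable log entry for a ticked consent checkbox (UTC time)."""
    ts = now if now is not None else datetime.now(timezone.utc)
    return ConsentRecord(user_id, ip_address, ts, policy_version)


def response_deadline(materials_complete_at: datetime) -> datetime:
    """Latest date to resolve a rights request under the 45-day window."""
    return materials_complete_at + RESPONSE_WINDOW
```

Making the record frozen reflects its role as evidence: once written, a consent entry should not be edited in place.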

User Age Verification Solutions and Regulatory Analysis

Based on COPPA and related practice, mainstream verification methods include signed consent forms, paper or electronic payments, credit card or other online payment systems, small-value transactions, phone calls, and video conferencing. The speakers emphasized that the verification process should not collect additional personal information from adults, since doing so increases data compliance obligations. Some vendors also engage third-party service providers to perform age verification, shifting part of the associated data compliance burden to them.

Game User Authentication and Control Practices

First, the game's registration page requires users to provide an explicit date of birth and avoids the use of real names, mitigating malicious incidents such as cyberbullying. Second, parental control features allow guardians to link their email addresses to a child's account, enabling oversight of the minor's in-game spending and social interactions. Finally, for age verification, the game uses an age selector wheel that requires players to enter an accurate birthdate, and dynamic verification codes are sent to guardians so that parents retain control over the actions of the users they supervise.
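
Dynamic verification codes of this kind are typically short-lived, single-use one-time codes. The sketch below uses Python's `secrets` module to generate them and `hmac.compare_digest` for a constant-time comparison; the ten-minute expiry, the six-digit format, and the class name are hypothetical, and the email delivery step is left as a comment.

```python
import hmac
import secrets
from datetime import datetime, timedelta
from typing import Dict, Tuple

CODE_TTL = timedelta(minutes=10)  # hypothetical expiry window


class GuardianCodeService:
    """Issues single-use six-digit codes for guardian approval."""

    def __init__(self) -> None:
        self._pending: Dict[str, Tuple[str, datetime]] = {}

    def issue(self, child_account_id: str, now: datetime) -> str:
        code = f"{secrets.randbelow(1_000_000):06d}"
        self._pending[child_account_id] = (code, now + CODE_TTL)
        # A real system would email this to the linked guardian address
        # instead of returning it to the caller.
        return code

    def verify(self, child_account_id: str, submitted: str, now: datetime) -> bool:
        entry = self._pending.pop(child_account_id, None)  # single use
        if entry is None:
            return False
        code, expires_at = entry
        if now > expires_at:
            return False
        return hmac.compare_digest(code, submitted)
```

Any attempt, right or wrong, consumes the pending code. That is the simplest way to block guessing; a production system might instead allow a small number of retries.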

Compliance Outlook: Content Compliance and User Age Verification in Social Products

Social products face content compliance challenges when underage users can access potentially problematic content, such as sexually suggestive material. The current industry practice on social platforms is to require users to enter their age proactively to verify that they are at least 18. Users under 18 can register by submitting identity verification documents. In addition, the facial recognition technology common in social products can identify minors and suspend their accounts, and real-name authentication is another prevalent verification method. Compared with mainland China, overseas markets impose relatively lighter age verification requirements and favor guided or neutral verification approaches. As AI technology advances, more companies may offer age estimation services, giving social products additional support.

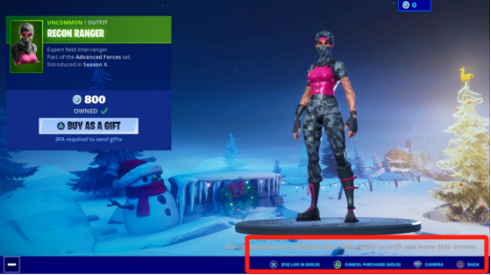

Deceptive Design and Dark Patterns in Game Design

Deceptive design covers misleading and confusing tactics that induce players into irrational or impulse purchases. Dark patterns can constitute unfair or deceptive acts or practices under Section 5 of the FTC Act; examples include fake in-game countdown timers for limited-time offers and false limited-quantity item sales. Likewise, in game advertising, using real-person KOLs to promote gameplay interfaces when the actual player experience differs significantly from the promotional materials may constitute consumer fraud.
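
One way to keep a countdown honest is to derive it from a single server-side end time and real stock, rather than restarting a timer on each login (the classic fake-urgency pattern). A hypothetical Python sketch; it illustrates the design principle only and is not a legal safe harbor:

```python
from datetime import datetime, timezone
from typing import Optional


class LimitedTimeOffer:
    """An offer whose countdown and stock reflect real server-side state."""

    def __init__(self, ends_at: datetime, stock: Optional[int] = None) -> None:
        self.ends_at = ends_at  # one fixed end time, shared by every player
        self.stock = stock      # None means the offer is not quantity-limited

    def seconds_remaining(self, now: datetime) -> int:
        return max(0, int((self.ends_at - now).total_seconds()))

    def is_active(self, now: datetime) -> bool:
        in_window = now < self.ends_at
        return in_window and (self.stock is None or self.stock > 0)

    def claim(self, now: datetime) -> bool:
        """Sell one unit if the offer is genuinely still available."""
        if not self.is_active(now):
            return False
        if self.stock is not None:
            self.stock -= 1
        return True
```

Because every client reads the same `ends_at` and `stock`, the timer a player sees cannot reset or show artificial scarcity.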

Social Features and Compliance Requirements for Minor Protection

It is recommended that high-risk social features be disabled by default for minor users, requiring explicit opt-in authorization. Concurrently, parents should retain the right to review communication activities involving minor users.

Compliance Analysis of Prize Drawings

When running online or offline prize drawings, operators must comply with consumer protection laws. First, activity rules must be transparent, and false advertising (such as implying that purchases increase the odds of winning) must be avoided. Second, prioritize child protection by implementing measures that keep minor users away from mechanics such as loot box-style prize draws. For offline sweepstakes specifically, note that U.S. states regulate them differently, for example with registration requirements in Florida and New York and monetary thresholds in Rhode Island. It is therefore advisable to run sweepstakes primarily through online channels (e.g., social media shares) to reduce compliance costs.
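
A draw that matches these rules gives every eligible participant one equal chance and ignores purchase history entirely. The sketch below is hypothetical: it deduplicates entries per user, excludes minors outright (a stricter assumption than the text states), and takes a `random.Random` instance so tests can seed it; in production `random.SystemRandom()` could be passed instead. It does not address state registration or bonding rules.

```python
import random
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Entry:
    user_id: str
    is_minor: bool
    made_purchase: bool  # recorded for bookkeeping, never used to weight the draw


def draw_winners(entries: Iterable[Entry], k: int, rng: random.Random) -> List[str]:
    """Pick k distinct winners: one chance per adult entrant, purchase or not."""
    eligible = sorted({e.user_id for e in entries if not e.is_minor})
    if k > len(eligible):
        raise ValueError("more winners requested than eligible entrants")
    return rng.sample(eligible, k)
```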