The First Descendant has faced intense criticism for running TikTok ads that feature AI-generated influencers and for deepfaking real creators' videos without their knowledge or consent.

Although the TikTok ads appear to feature genuine streamers promoting The First Descendant, closer inspection reveals unnatural-sounding voices, lip movements that do not match the scripted dialogue, and odd head movements. One example is a deepfake video of horror game streamer DanieltheDemon, in which he appears to discuss playing and endorsing the game. DanieltheDemon later clarified that he has no affiliation with the game or the advertisement. His most popular TikTok video, which has garnered 8.3 million views, shows him playing the indie horror game The Guest. The fake advertisement appears to have taken a clip from this video, mirror-flipped it, used AI to alter his lip movements and speech, and then spliced in footage from a completely different game to make it seem as though he was promoting it.

Following the public outcry, NEXON, the developer of The First Descendant, promptly issued a statement explaining that the advertisements were part of a marketing campaign for the game's third season. According to the statement, the company had launched a creative challenge program for TikTok creators that allows them to voluntarily submit their content for use as advertising material. All submitted videos are verified through TikTok's system to check for copyright infringement before being approved as advertising content. The statement added, however, that the controversial ad appears to have been produced under improper circumstances, and that NEXON is conducting a thorough joint investigation with TikTok to establish the facts.

As AI technology continues to advance, tools that can imitate or blend artistic styles are no longer rare. Deepfakes and generative art have also progressed significantly: readily available AI tools can now turn static works into continuous, moving sequences. At its root, the controversy over NEXON's advertisement stems from the misuse of these enhanced capabilities.

To address the challenges posed by generative AI, South Korea's “Basic Act on Artificial Intelligence” was enacted in December 2024. It imposes transparency obligations on AI service providers: operators of generative AI services must inform users when content is AI-generated and must apply labels or watermarks to deepfake content that could be mistaken for reality. Non-compliance may incur administrative fines of up to 30 million KRW.

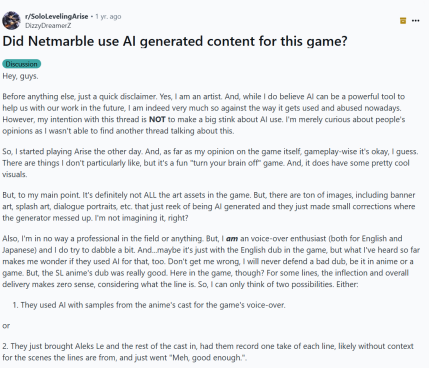

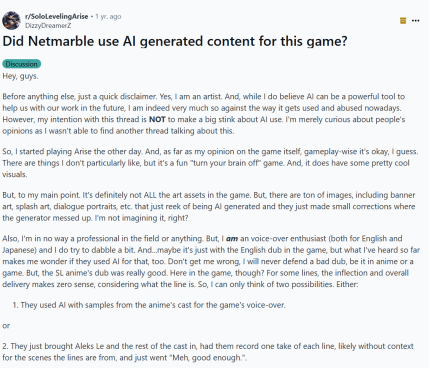

NEXON's controversy is not an isolated case. Fellow South Korean gaming giant Netmarble, for instance, faced heightened player skepticism when it launched a new title based on the globally popular IP “Solo Leveling.” After the game's release, numerous players posted on social platforms such as Reddit, pointing out that many in-game art assets, including character portraits and cutscene illustrations, showed clear signs of AI generation, such as inconsistent detail handling, blurred texture blending, and illogical linework.

Although Netmarble has not issued an official response, the wave of questions itself reflects a deeper issue. A single confirmed instance of improper AI use can saddle a company with a long-term “reputational liability.” Player communities then view every subsequent release from that company with suspicion and proactively conduct their own “AI audits.” Because of this ongoing, community-driven scrutiny, companies face significant reputational risk even when they use AI tools within regulatory boundaries. In the AI era, winning legal battles is crucial, but maintaining community trust may prove the more arduous and enduring challenge.

Embracing AI is an inevitable path to improving R&D efficiency and supporting creative work. To use AIGC technology responsibly while limiting both legal exposure and negative public sentiment, gaming industry participants are advised to adopt the following strategic measures:

1. Establish “Explicit Consent” as the Foundation: Before using any individual's likeness, voice, or other identifying characteristics to train or generate AI content, obtain their explicit, informed, and comprehensive authorization. Standard model release agreements may be insufficient for AI applications. Contracts should explicitly mention terms like “artificial intelligence,” “deepfakes,” and “synthetic generation,” clearly defining the scope and duration of use.

2. Proactive Transparency and Mandatory Labeling: Implement clear, prominent watermarking or labeling systems across all marketing materials and game content that use AIGC technology. This helps meet transparency requirements such as those in South Korea's Basic Act on Artificial Intelligence, and it also blunts accusations of “deception” and fosters user trust.

3. Establish and Enforce a Robust Internal AI Ethics Framework: Companies should develop explicit internal AI ethics guidelines covering data source legitimacy, algorithmic fairness, application transparency, and respect for creator rights. This framework not only supplements legal compliance but also serves as critical evidence demonstrating the company's “duty of care” in the event of disputes.

4. Strengthen Due Diligence for Third-Party Suppliers: For activities involving third parties or user-generated content, such as NEXON's “Creative Challenge,” reliance on platform-level reviews alone is insufficient. Companies must implement rigorous due diligence processes to ensure all marketing partners and content-submitting users adhere to corporate standards regarding licensing, intellectual property, and ethics.
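
For teams that want to operationalize these measures, the checks can be encoded as a simple pre-publication gate. The following Python sketch is illustrative only: the `ConsentRecord` and `AdSubmission` types, their fields, and the `review_submission` function are hypothetical names introduced here, not part of any NEXON or TikTok system. It assumes a company keeps its own consent records and treats the platform's review as just one input among several.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class ConsentRecord:
    """Hypothetical record of a person's authorization (measure 1)."""
    person: str
    covers_ai_generation: bool  # contract explicitly names AI / deepfakes / synthetic generation
    expires: date               # end of the agreed duration of use


@dataclass
class AdSubmission:
    """Hypothetical ad creative submitted by a third party (measure 4)."""
    title: str
    uses_aigc: bool                       # contains AI-generated or AI-altered content
    has_ai_label: bool                    # visible label or watermark is present (measure 2)
    depicted_people: List[str] = field(default_factory=list)
    platform_review_passed: bool = False  # e.g. the platform's own copyright check


def review_submission(ad: AdSubmission, consents: List[ConsentRecord], today: date) -> List[str]:
    """Return a list of blocking issues; an empty list means the ad may proceed."""
    issues = []
    # Platform review is necessary but not sufficient on its own.
    if not ad.platform_review_passed:
        issues.append("platform review not passed")
    # AI-generated or AI-altered content must carry a label or watermark.
    if ad.uses_aigc and not ad.has_ai_label:
        issues.append("AI-generated content is not labeled")
    # Every depicted person needs a valid consent record on file.
    by_person = {c.person: c for c in consents}
    for person in ad.depicted_people:
        consent = by_person.get(person)
        if consent is None:
            issues.append(f"no consent on file for {person}")
        elif ad.uses_aigc and not consent.covers_ai_generation:
            issues.append(f"consent for {person} does not cover AI generation")
        elif consent.expires < today:
            issues.append(f"consent for {person} has expired")
    return issues
```

Under these assumptions, an ad that passed the platform's copyright check would still be blocked if it depicts a streamer with no consent record, which is the gap that the fourth measure targets. A real process would also need human review; a check like this only ensures that no submission skips the documented steps.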
