As mobile phones evolve from passive tools into agents that can understand commands, take over operations, and even "play games" for you, the balance of the gaming industry is being quietly disrupted.

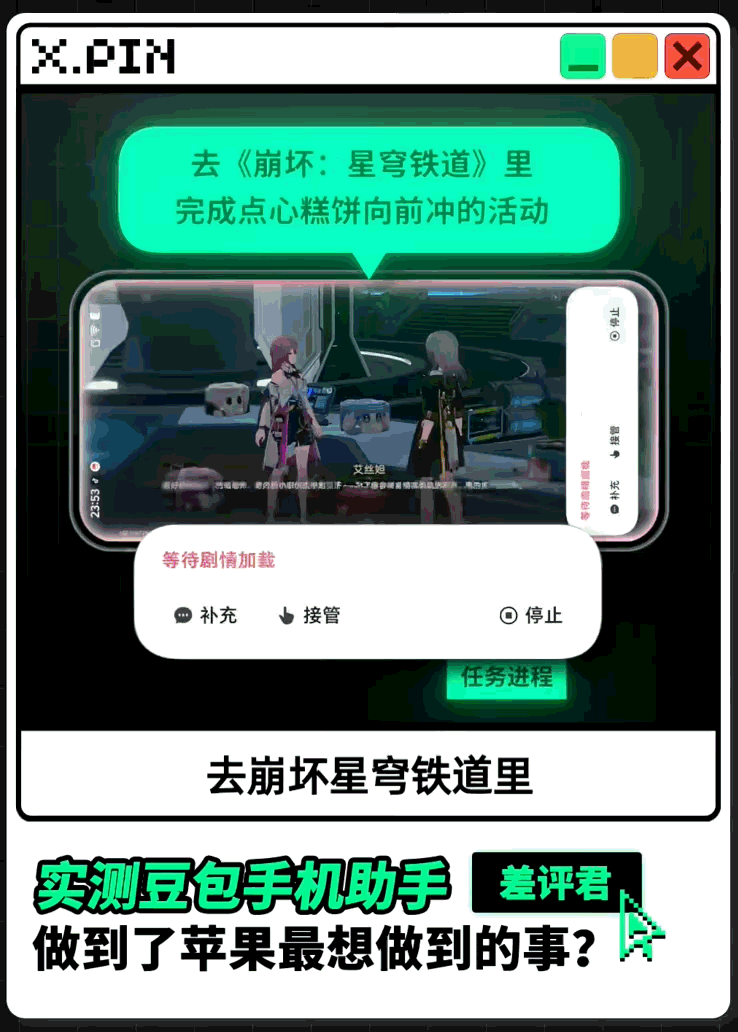

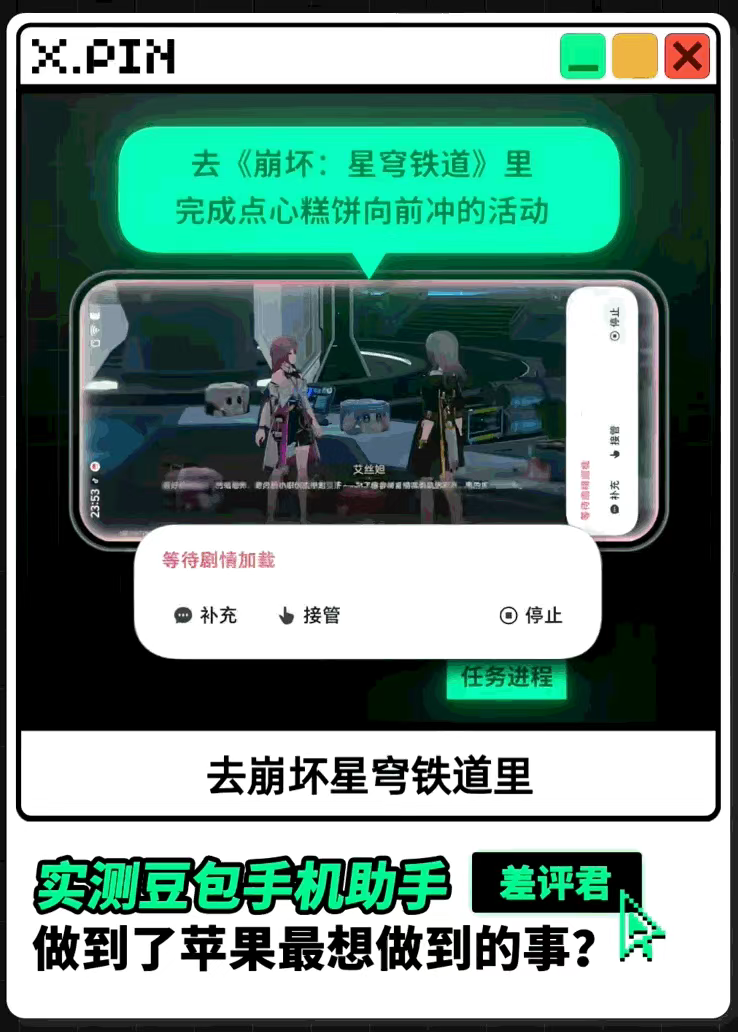

On December 1st, ByteDance, in collaboration with Nubia, launched the "Doubao Mobile Assistant." The assistant can not only place orders and order takeout automatically but can even play games on the user's behalf. Reviews published online so far suggest that it has room to provide "automated assistance" in games ranging from simple match-3 titles such as Anipop (Kai Xin Xiao Xiao Le), to complex strategy games such as Sanguosha, to ACG (anime, comics, and games) titles such as Honkai: Star Rail. As this new business model takes hold, the legal risks of AI-assisted gaming are gradually surfacing.

I. Analysis of Business Impact

The core capability of mobile assistants (also known as AI Agents) lies in their ability to, upon user authorization, recognize interfaces through visual processing and simulate operations to execute tasks automatically across applications. When applied to gaming scenarios, three primary auxiliary models emerge, each impacting the game's operating environment to varying degrees:

1. Hollowing Out "Player Engagement"

These actions do not directly modify game data or supply privileged information; instead, the AI Agent takes over the manual, repetitive operations the game requires of the player.

Typical Scenarios: Automatically completing non-competitive daily tasks such as daily logins, check-ins, and "grinding" dungeons; for games with "watch ads for rewards" mechanisms, the AI Agent can also automatically complete the ad-viewing process.

Substantive Impact: These actions directly hollow out the operational system designed to maintain daily active users (DAU) and increase user stickiness. They allow players to obtain in-game resources without investing time or attention, eroding the fundamental value cycle that operators build through "player engagement."

2. Undermining "Information Fairness"

These actions begin to intervene in core game processes, using technical means to obtain and process information that other players cannot access instantly, thereby destroying the fair competitive environment.

Typical Scenarios: In MOBA (Multiplayer Online Battle Arena) games, the AI analyzes the screen in real-time to automatically record and prompt the cooldown times of Red/Blue Buffs and key enemy skills (e.g., Flash, Ultimates); in shooting games, it automatically marks enemy positions; in card games, it implements automated card counting and calculation.

Substantive Impact: This essentially plays the role of a traditional cheat plug-in (wai gua). Although it does not directly modify in-game memory data, it destroys "information fairness" by enhancing the player's ability to acquire and process information.

3. Replacement of "Authentic Competitive Behavior"

These actions are no longer limited to information assistance or automated daily interactions; the AI fully or partially takes over the player’s PVP (Player vs. Player) competitive operations.

Typical Scenarios: Implementing "auto-aim" and "auto-fire" in shooting games; automated optimization of combo releases in action or competitive games; and even "fully automated play-throughs" from login to match completion.

Substantive Impact: If such AI-assisted behaviors become stable functions, they will alter the essence of online PVP confrontation, turning it from "Human vs. Human" into "Machine vs. Machine." This not only destroys competitive fairness but also renders the gaming experience and ranking systems meaningless, causing fundamental damage to the game ecosystem.
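The three categories above can be summarized as a small lookup structure. The following is only an illustrative sketch: the names `ImpactTier`, `SCENARIO_TIERS`, and `classify`, as well as the scenario labels, are hypothetical and simply restate the typical scenarios listed in this section. It is not a legal test or any operator's actual detection logic.

```python
from enum import Enum
from typing import Optional


class ImpactTier(Enum):
    """The three impact categories described in this section."""
    ENGAGEMENT = "hollows out player engagement"
    INFORMATION = "undermines information fairness"
    COMPETITION = "replaces authentic competitive behavior"


# Hypothetical scenario labels, restating the examples given in the text.
SCENARIO_TIERS = {
    "daily_check_in": ImpactTier.ENGAGEMENT,
    "dungeon_grinding": ImpactTier.ENGAGEMENT,
    "ad_viewing": ImpactTier.ENGAGEMENT,
    "cooldown_tracking": ImpactTier.INFORMATION,
    "enemy_position_marking": ImpactTier.INFORMATION,
    "card_counting": ImpactTier.INFORMATION,
    "auto_aim": ImpactTier.COMPETITION,
    "auto_fire": ImpactTier.COMPETITION,
    "full_match_automation": ImpactTier.COMPETITION,
}


def classify(scenario: str) -> Optional[ImpactTier]:
    """Return the impact tier for a known scenario label, or None if unlisted."""
    return SCENARIO_TIERS.get(scenario)
```

A scenario that does not appear in the table returns `None`, which reflects that this mapping only covers the examples named here and says nothing about other uses.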

II. Legal Risk Analysis

Based on the aforementioned potential impacts, AI Agents may constitute unfair competition against game operators; in severe cases, they may also involve criminal liability.

1. Unfair Competition Risk Analysis

(1) Regulation based on Article 13 of the Anti-Unfair Competition Law

Article 13 of the Anti-Unfair Competition Law explicitly stipulates that business operators shall not use data, algorithms, technologies, platform rules, or similar means, whether by influencing user choices or otherwise, to carry out acts that hinder or disrupt the normal operation of network products or services lawfully provided by other operators. Paragraph 3 further clarifies that operators shall not improperly obtain or use data held by other operators, thereby harming their legitimate rights and interests.

The conduct these provisions describe matches how AI Agents currently operate: by obtaining "Accessibility Permissions" and "inject_events" permissions, they read screen content and simulate user input to execute cross-app automated tasks. In this process, the AI Agent does not obtain authorization from the third-party application and may cause significant damage to the legitimate interests of third-party operators, in two main ways.

Service Substitution: AI Agents can provide alternative services by reading data. For example, an AI Agent can assist with card counting in card games, many of which sell card-counting items as a paid feature. The AI Agent's assistance effectively replaces the in-game paid function and hinders its normal operation.

Operational Disruption: By replacing player operations, AI Agents not only hinder the operator's long-term design for maintaining DAU and user stickiness but also directly impact competitive fairness, destroy the community ecology, and adversely affect the commercial operation of the game.
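The operating principle described above (read the screen, decide, simulate input) can be sketched as a simple loop. This is a minimal illustration under stated assumptions, not how any particular assistant is implemented: `run_agent`, `TapEvent`, and the three callables are hypothetical names, and the screen reader and event injector are stubs rather than real platform APIs.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class TapEvent:
    """A simulated tap at screen coordinates (hypothetical type)."""
    x: int
    y: int


def run_agent(read_screen: Callable[[], str],
              decide: Callable[[str], Optional[TapEvent]],
              inject: Callable[[TapEvent], None],
              max_steps: int = 10) -> int:
    """Observe the screen, choose an action, inject it; return the number of injected events."""
    injected = 0
    for _ in range(max_steps):
        screen = read_screen()   # stands in for screen reading via accessibility access
        action = decide(screen)  # stands in for the visual-recognition / model step
        if action is None:       # nothing left to do on this screen
            break
        inject(action)           # stands in for simulated input
        injected += 1
    return injected


# Stubbed demo: two screens show a reward button, the third does not.
frames = iter(["reward_button", "reward_button", "idle"])
taps: List[TapEvent] = []
count = run_agent(
    read_screen=lambda: next(frames),
    decide=lambda s: TapEvent(100, 200) if s == "reward_button" else None,
    inject=taps.append,
)
```

Note that nothing in the loop consults the target application: the agent acts only on what it sees and what the user has authorized, which is the gap the unfair competition analysis turns on.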

In the unfair competition dispute case (2024) Hu 0105 Min Chu No. 25009 heard by the Shanghai Changning District People's Court, the court ruled that the defendant used technical means to perform rapid exploration and level-up for "power-leveling" users, hindering the normal business and operational services of Genshin Impact and impacting the game ecosystem. This was found to constitute unfair competition under Article 12, Paragraph 4 (now Article 13, Paragraph 4) of the Anti-Unfair Competition Law.

(2) Regulation based on Article 2 of the Anti-Unfair Competition Law

Setting aside the technical principles, the functional effects of AI Agents alone may trigger Article 2 of the Anti-Unfair Competition Law, on the ground that they violate commercial ethics and harm the legitimate rights and interests of other operators.

Game operators' User Agreements usually explicitly prohibit the use of any third-party software, scripts, or plug-ins to automate gameplay or interfere with normal services. Using an AI Agent to assist play therefore first constitutes a breach of the User Agreement. Furthermore, AI Agents can reduce the attention and time players invest in a game, diminishing the operator's commercial opportunities and advertising value while deepening player reliance on the assistant itself. This "self-profiting at others' expense" nature may be deemed a violation of the industry's commercial ethics and thus constitute unfair competition.

2. Criminal Risks

In severe circumstances, especially the development and sale of AI Agent plug-in functions intended to destroy core game functions, the red line of criminal liability has clearly been crossed.

In the nation's first "AI Plug-in" case heard by the Yujiang District People's Court of Yingtan City, Jiangxi Province in 2024, the defendant wrote and sold "AI Plug-in" programs that transmitted operational data into the game entirely through visual recognition of game screens and big data model calculations, achieving "auto-aim" and "auto-fire" functions. This technical principle is essentially similar to the current model where AI Agents use permissions for visual recognition and auxiliary operations.

Since such plug-in behaviors do not involve copying, intercepting, or destroying game data, they do not constitute the Crime of Copyright Infringement or the Crime of Illegally Obtaining Computer Information System Data or Illegally Controlling a Computer Information System. The court ultimately convicted the defendant of the Crime of Providing Programs or Tools Specially Used for Intruding into or Illegally Controlling Computer Information Systems. This conviction is consistent with the Interpretation of the Supreme People's Court and the Supreme People's Procuratorate on Several Issues Concerning the Application of Law in Handling Criminal Cases Endangering Computer Information System Security, which defines tools that "circumvent or break through security protection measures... to exercise control over a computer information system without authorization or beyond authorization" as "specialized tools for intrusion or illegal control."

If AI Agents are intentionally used for game cheating or as plug-ins, based on similar technical principles and the legal interests infringed upon in this case, they may likewise be suspected of the same crime.

Concluding Remarks

AI Agent technology, represented by the Doubao Mobile Assistant, stands at a crossroads of "empowerment" and "disruption." For the gaming industry, it is both a potential opportunity to enhance player interaction and a multifaceted challenge, spanning operational security and legal compliance, that arises when the technology is abused.

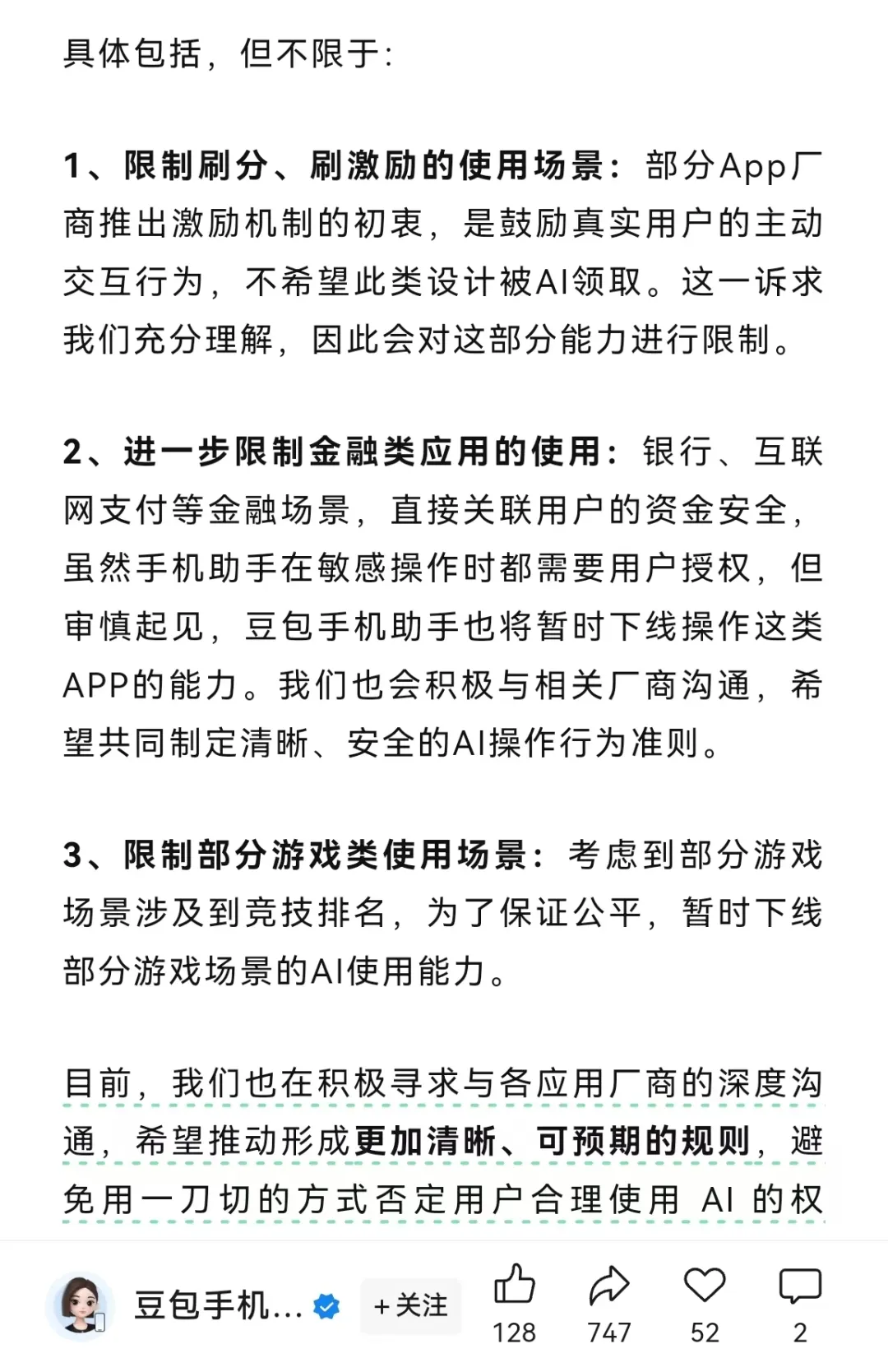

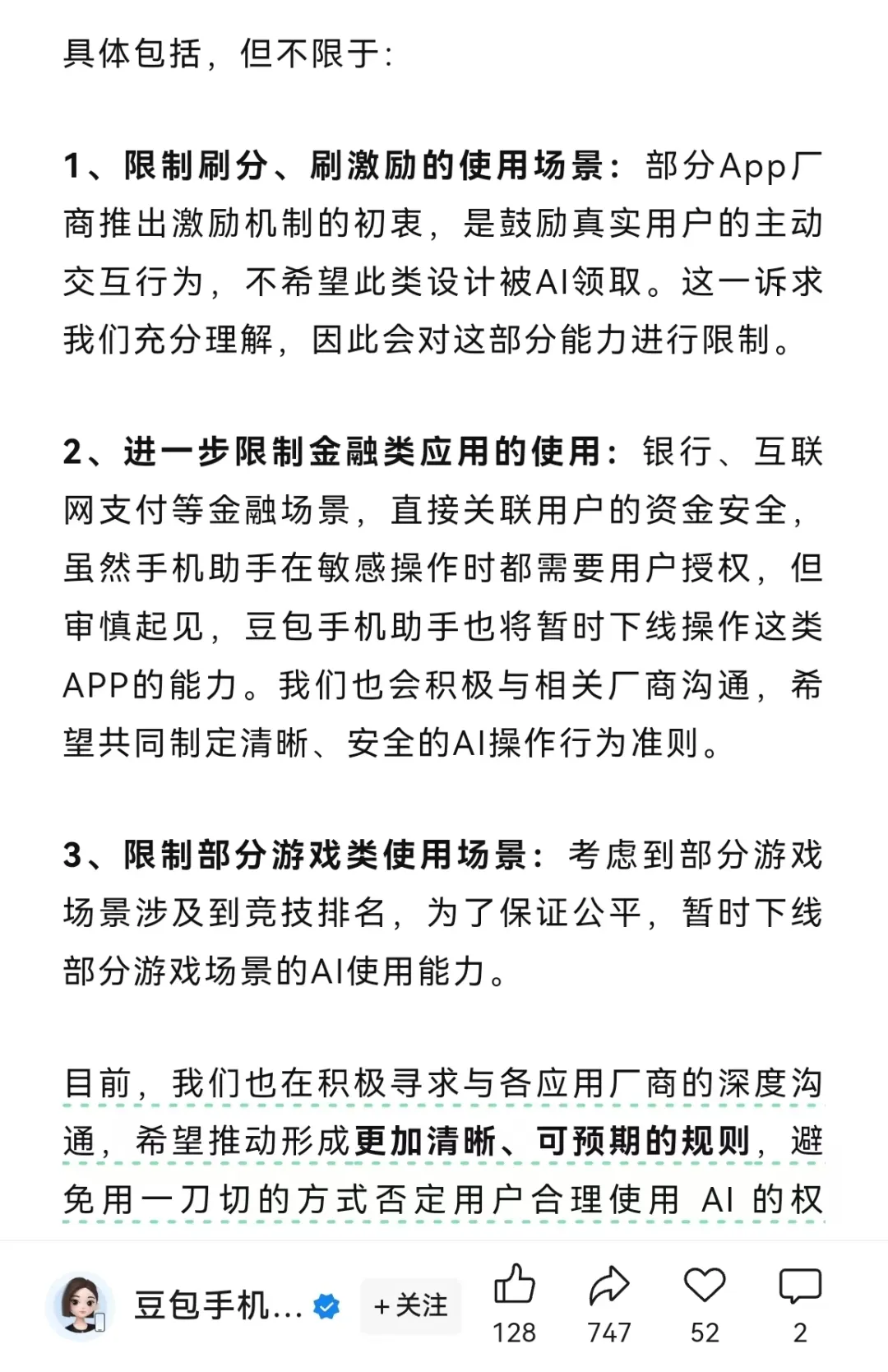

On December 5th, only four days after the release of the Doubao Mobile Assistant, an official "Statement on Adjusting AI Mobile Operation Capabilities" was released, specifically restricting its use in game scenarios involving "score grinding, incentive grinding" and "competitive rankings." We believe this directly corresponds to the two impacts proposed in this article: "hollowing out player engagement" and "replacing authentic competitive behavior."

This rapid self-regulation by the provider reflects a keen sense of compliance risk and confirms that the impact of AI Agents on game fairness and business models is no longer a theoretical deduction but an imminent reality.

In the face of this change, gaming companies should not only understand the effective paths for legal rights protection but also actively participate in industry standards and governance mechanisms for AI Agent interaction. Together with technology providers and regulatory agencies, they should clarify the boundaries between legal assistance and illegal plug-ins. Only by deeply embedding legal compliance requirements into the front end of product design and technical countermeasures can they safeguard their core commercial value and the fair competition arena in the more complex AI interaction environment of the future.